What If You’re Automating the Wrong Parts of the Business?

The future of work depends on what stays human — and what shouldn’t.

👋🏽 Hey, it’s Anita. Welcome to AI@Scale — I write for leaders trying to make AI work at scale, where the hardest part isn’t the tech, it’s the humans.

📊 It’s Q4! Want the AI training ROI calculator I use with my clients? Download it here!

It’s been a busy, busy few weeks. In addition to the holidays, I had the incredible privilege of supporting Rippleworks in their Leaders’ Studio.

If you’re not familiar, Rippleworks is on a mission to accelerate the impact of social ventures around the world by connecting them with senior leaders who volunteer their time and expertise. These leaders help founders navigate the operational realities of scaling—everything from talent to technology to, absolutely no surprise to anyone… AI.

In this month’s session, I worked with their HR and People leaders to break down what responsible AI adoption actually looks like: what must stay human, what should be augmented, and what’s ready for autonomy. The conversations were sharp and honest (and reinforced how urgent and misunderstood this topic still is!)

Which brings me to today’s piece!

Performance season always brings a flurry of conversations around growth, readiness, and the future of roles. But this year, those conversations sit on top of a harder, often unspoken layer: AI is reshaping the work inside those roles faster than most organizations are willing to acknowledge.

Not because AI is replacing everyone.

Not because the workforce is resistant.

But because we’re still asking the wrong question.

We’re still asking:

“Will AI take your job?”

When we should be asking:

“Which parts of your work must remain human, and which parts absolutely shouldn’t?”

If we keep evaluating people against work that’s already shifting, we’re not managing performance—we’re managing to a version of the work that no longer exists.

Human capability isn’t the thing being disrupted right now.

Work composition is.

And this is where most organizations are misaligned.

They automate whatever is most visible and keep humans responsible for whatever feels risky. This year, many organizations have focused on reassuring employees instead of involving them. So instead of having the real conversation, they retrofit AI into roles that were never designed for hybrid human–machine workflows.

If leaders want to take the fear out of AI, they have to replace it with clarity.

Not emotional clarity but operational clarity.

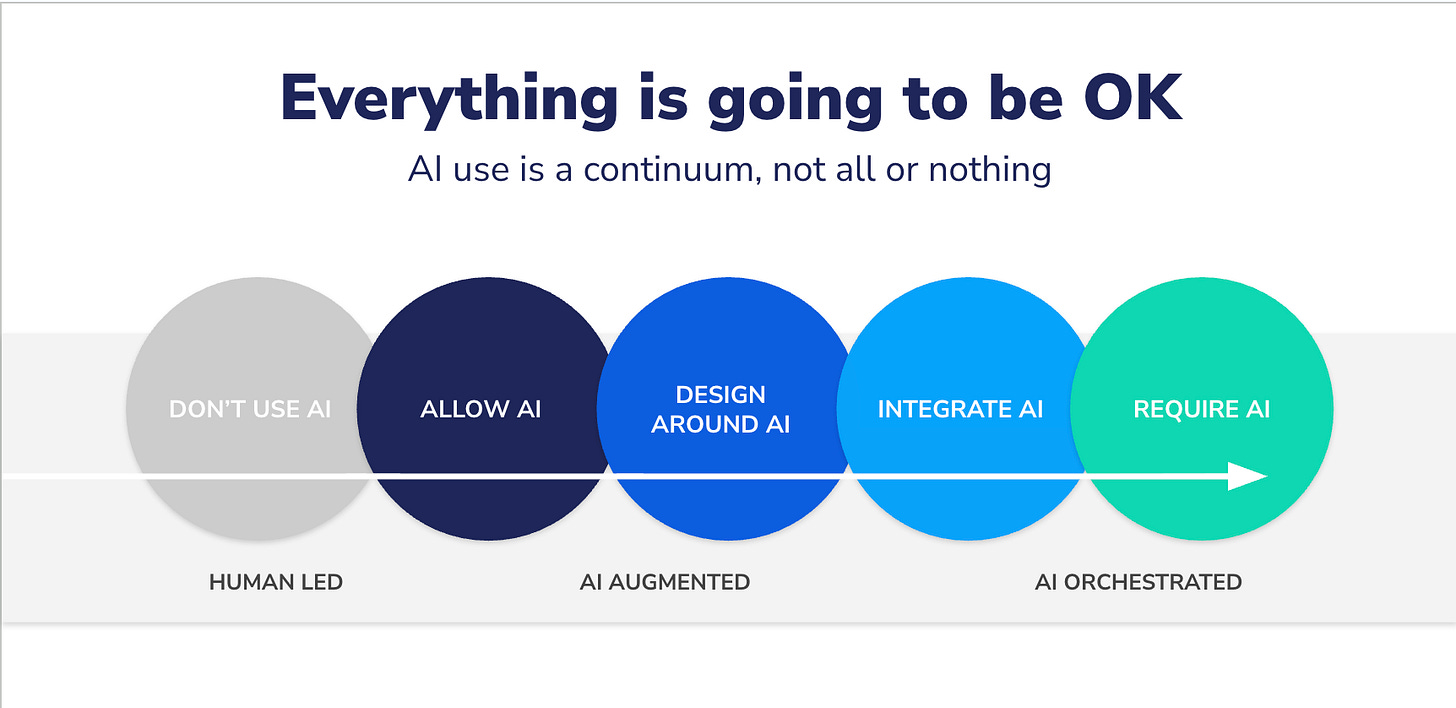

And that clarity begins with classifying work across the Human → AI Continuum.

The continuum isn’t about who uses AI and who doesn’t — it’s a way of understanding how work actually shifts. Some work requires human judgment and must stay human. Some work is better when humans and AI share responsibility. And some work is structured enough that it should move toward autonomy. Classifying work this way gives teams a clearer path forward and removes the fear that everything is changing at once.

The continuum comes down to three core components. Here’s how to think about each one…

1. Human-Led Work

Judgment. Interpretation. Strategic thinking. Decisions with imperfect inputs.

This includes things like succession planning, performance calibration, high-context customer escalations, and narrative development.

This work stays human not because AI is incapable, but because the organization must trust the human accountable for the outcome. Accountability is a design choice, not a capability debate.

2. AI-Augmented Work

Pattern detection. Synthesis. Scenario generation. Analysis at scale.

Think personalization, skills mapping, content clustering, workflow diagnostics.

This zone is the largest in the enterprise—and the most misunderstood.

It’s not fully human. It’s not fully AI.

It’s a designed collaboration, where humans provide judgment and AI provides breadth, speed, and precision.

This is also where most of the fear sits, simply because no one has explained what “human-in-the-loop” actually looks like.

3. Autonomous Work

Structured processes with known rules and predictable outcomes.

Data routing. Tagging. Quality checks. Version control. Tier-zero response flows.

This is work humans should not be doing, not because it’s beneath them, but because it introduces inconsistency, delay, and operational drag.

Automation here isn’t a threat.

It’s actually just responsible design.
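If your team keeps a task inventory, the three zones above can be sketched as a simple triage rule. This is a minimal illustration, not a definitive method: the `Task` fields (`needs_human_accountability`, `has_known_rules`) and the `classify` function are hypothetical names I'm assuming for the example, and a real classification would involve the people doing the work, not just two yes/no flags.

```python
from dataclasses import dataclass
from enum import Enum


class Zone(Enum):
    HUMAN_LED = "human-led"
    AI_AUGMENTED = "ai-augmented"
    AUTONOMOUS = "autonomous"


@dataclass
class Task:
    name: str
    # Assumption: the organization has decided a human must own the outcome.
    needs_human_accountability: bool
    # Assumption: the process is structured, with known rules and predictable outcomes.
    has_known_rules: bool


def classify(task: Task) -> Zone:
    """Place a task on the Human -> AI continuum (illustrative rule only)."""
    # Accountability is checked first: it is a design choice, not a capability test.
    if task.needs_human_accountability:
        return Zone.HUMAN_LED
    # Structured, rule-bound work moves toward autonomy.
    if task.has_known_rules:
        return Zone.AUTONOMOUS
    # Everything else is shared: humans bring judgment, AI brings breadth and speed.
    return Zone.AI_AUGMENTED


inventory = [
    Task("succession planning", needs_human_accountability=True, has_known_rules=False),
    Task("skills mapping", needs_human_accountability=False, has_known_rules=False),
    Task("data tagging", needs_human_accountability=False, has_known_rules=True),
]

for task in inventory:
    print(f"{task.name}: {classify(task).value}")
```

Note that accountability is checked before anything else. That ordering mirrors the point made under Human-Led Work: a task can be perfectly rule-bound and still stay human if the organization needs a person to own the outcome.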

Let’s Look at an Example

From my vantage point in professional services, this is the most tangible example. Think about how teams manage data across CX, learning, operations, analytics, and services.

Humans still spend enormous amounts of time on:

tagging

routing

cleaning

assembling reports

reconciling spreadsheets

creating dashboards manually

None of this requires judgment.

It requires precision and repeatability (things AI does exceptionally well).

But what about interpreting those insights?

Or deciding what matters and why?

And choosing the action that aligns with strategy?

And how about communicating the narrative behind the data?

That is human-led work. That is what people should be evaluated on.

Yet during performance season, we tend to talk about building “strategic capability” while anchoring people to tasks that make strategic capability impossible.

We cannot ask people to move up the value chain while burying them under work that should have been automated years ago.

The Hard Truth

If you automate the wrong thing, the system breaks.

If you fail to automate the right things, the people break.

And if you try to automate everything, you recreate the same workflow, just with far more fragility.

Performance season is the moment to reset the narrative.

Not to soothe people, but to involve them. So the conversations must change to:

Here’s the part of your work AI will take—and that’s progress.

Here’s the part of your work that will expand—because judgment becomes more valuable, not less.

Here’s where your role is going—and here’s how we redesign it together.

The organizations that navigate AI well will be the ones that treat employees as partners in redesigning the work.

Fear dissolves when the work is clear.

And clarity is what accelerates human performance.

Everything will be alright—

but only if we stop pretending that reassurance is a strategy.